해당 자료는 "모두를 위한 머신러닝/딥러닝 강의"를 보고 개인적으로 정리한 내용입니다.

hunkim.github.io

본 실습의 tensorflow는 1.x 버전입니다.

Non-normalized 값에 의해 예측값을 제대로 연산하지 못하는 경우

import tensorflow.compat.v1 as tf

tf.disable_v2_behavior()

#tensorflow v1호환

import numpy as np

# 마지막 열 값이 Y

# X변수 중간에 큰 값이 중간에 섞여있음(Non-normalized)

xy = np.array([[828.659973, 833.450012, 908100, 828.349976, 831.659973],

[823.02002, 828.070007, 1828100, 821.655029, 828.070007],

[819.929993, 824.400024, 1438100, 818.97998, 824.159973],

[816, 820.958984, 1008100, 815.48999, 819.23999],

[819.359985, 823, 1188100, 818.469971, 818.97998],

[819, 823, 1198100, 816, 820.450012],

[811.700012, 815.25, 1098100, 809.780029, 813.669983],

[809.51001, 816.659973, 1398100, 804.539978, 809.559998]])

x_data = xy[:, 0:-1]

y_data = xy[:, [-1]]

# placeholders for a tensor that will be always fed.

X = tf.placeholder(tf.float32, shape=[None, 4])

Y = tf.placeholder(tf.float32, shape=[None, 1])

W = tf.Variable(tf.random_normal([4, 1]), name='weight')

b = tf.Variable(tf.random_normal([1]), name='bias')

hypothesis = tf.matmul(X, W) + b

cost = tf.reduce_mean(tf.square(hypothesis - Y))

# Minimize

optimizer = tf.train.GradientDescentOptimizer(learning_rate=1e-5)

train = optimizer.minimize(cost)

sess = tf.Session()

sess.run(tf.global_variables_initializer())

for step in range(2001):

cost_val, hy_val, _ = sess.run(

[cost, hypothesis, train], feed_dict={X: x_data, Y: y_data})

print(step, "Cost: ", cost_val, "\nPrediction:\n", hy_val) ML_Lab_07_1_1.ipynb

0.13MB

ML_Lab_07_1_1.ipynb

0.13MB

min-max scale를 이용해 Non-normalized를 Normalized로 변환하여 수행

import tensorflow.compat.v1 as tf

tf.disable_v2_behavior()

#tensorflow v1호환

import numpy as np

def min_max_scaler(data):

numerator = data - np.min(data, 0)

denominator = np.max(data, 0) - np.min(data, 0)

# noise term prevents the zero division

return numerator / (denominator + 1e-7)

# 마지막 열 값이 Y

# X변수 중간에 큰 값이 중간에 섞여있음(Non-normalized)

xy = np.array([[828.659973, 833.450012, 908100, 828.349976, 831.659973],

[823.02002, 828.070007, 1828100, 821.655029, 828.070007],

[819.929993, 824.400024, 1438100, 818.97998, 824.159973],

[816, 820.958984, 1008100, 815.48999, 819.23999],

[819.359985, 823, 1188100, 818.469971, 818.97998],

[819, 823, 1198100, 816, 820.450012],

[811.700012, 815.25, 1098100, 809.780029, 813.669983],

[809.51001, 816.659973, 1398100, 804.539978, 809.559998]])

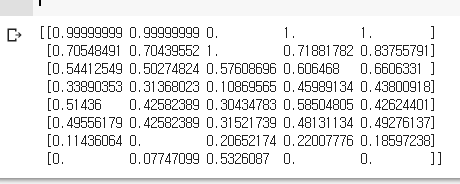

# 0 ~ 1의 값으로 변환하여 일반화

xy = min_max_scaler(xy)

print(xy)

x_data = xy[:, 0:-1]

y_data = xy[:, [-1]]

# placeholders for a tensor that will be always fed.

X = tf.placeholder(tf.float32, shape=[None, 4])

Y = tf.placeholder(tf.float32, shape=[None, 1])

W = tf.Variable(tf.random_normal([4, 1]), name='weight')

b = tf.Variable(tf.random_normal([1]), name='bias')

hypothesis = tf.matmul(X, W) + b

cost = tf.reduce_mean(tf.square(hypothesis - Y))

# Minimize

optimizer = tf.train.GradientDescentOptimizer(learning_rate=1e-5)

train = optimizer.minimize(cost)

sess = tf.Session()

sess.run(tf.global_variables_initializer())

for step in range(2001):

cost_val, hy_val, _ = sess.run(

[cost, hypothesis, train], feed_dict={X: x_data, Y: y_data})

print(step, "Cost: ", cost_val, "\nPrediction:\n", hy_val)

ML_Lab_07_1_2.ipynb

0.16MB

ML_Lab_07_1_2.ipynb

0.16MB

'도서,강의 요약 > 모두를 위한 머신러닝' 카테고리의 다른 글

| lec 07-2: Training/Testing 데이타 셋 (0) | 2020.06.20 |

|---|---|

| lec 07-1: 학습 rate, Overfitting, 그리고 일반화 (Regularization) (0) | 2020.06.15 |

| ML lab 06-2: TensorFlow로 Fancy Softmax Classification의 구현하기 (0) | 2020.06.11 |

| ML lab 06-1: TensorFlow로 Softmax Classification의 구현하기 (0) | 2020.06.03 |

| ML lec 6-2: Softmax classifier 의 cost함수 (0) | 2020.05.30 |